Meta Found Liable for Harm to Children’s Mental Health in New Mexico

SANTA FE, N.M. — A New Mexico jury delivered a landmark verdict on Tuesday, determining that Meta knowingly harmed children’s mental health and concealed its knowledge about child sexual exploitation on its social media platforms. This decision marks a significant shift in the legal landscape, signaling a growing willingness by the government to hold tech companies accountable for their actions.

The case, which lasted nearly seven weeks, was one of the first major trials involving social media giants and their impact on young users. Meanwhile, jurors in a separate federal court in California have been deliberating for over a week on whether Meta and YouTube should be held liable in a similar case.

The Verdict Against Meta

New Mexico prosecutors argued that Meta, which owns Instagram, Facebook, and WhatsApp, prioritized profits over safety, violating parts of the state’s Unfair Practices Act. The jury agreed with these allegations, finding that Meta made false or misleading statements and engaged in “unconscionable” trade practices that exploited the vulnerabilities of children.

Jurors determined that there were thousands of violations, each counted separately toward a total penalty of $375 million. That total is less than one-fifth of what prosecutors had sought. Despite the ruling, Meta’s stock rose 5% in early after-hours trading, suggesting that investors may not see the verdict as a major setback.

What the Penalty Means

Juror Linda Payton, 38, explained that the jury reached a compromise on the number of teenagers affected by Meta’s platforms while opting for the maximum penalty per violation. She said jurors believed each child was worth the maximum amount, $5,000 per violation.
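
For a rough sense of scale, the figures reported here can be checked with simple arithmetic. The sketch below assumes that $375 million is the total penalty and that every violation was assessed at the $5,000 maximum. The variable names are illustrative, and the results are implied estimates, not numbers stated by the court.

```python
# Back-of-the-envelope check of the reported figures.
# Assumption: $375 million is the total penalty across all violations.
TOTAL_PENALTY_USD = 375_000_000
# Assumption: every violation was assessed at the $5,000 maximum.
MAX_PER_VIOLATION_USD = 5_000

# Number of violations implied by the two figures above.
implied_violations = TOTAL_PENALTY_USD // MAX_PER_VIOLATION_USD

# "Less than one-fifth of what prosecutors had sought" implies the
# request exceeded five times the awarded total.
implied_minimum_request_usd = TOTAL_PENALTY_USD * 5

print(f"Implied violations: {implied_violations:,}")
print(f"Prosecutors sought more than: ${implied_minimum_request_usd:,}")
```

Under those assumptions, the total works out to 75,000 violations, which is consistent with the jury finding "thousands" of them, and the prosecutors' request would have exceeded $1.875 billion.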

What Comes Next for Meta

Although the jury found Meta liable, the company will not be forced to change its practices immediately. A judge will determine whether Meta’s platforms created a public nuisance and whether the company should pay for public programs to address the harms. This second phase of the trial is scheduled for May.

Meta has said it disagrees with the verdict and will appeal. A spokesperson emphasized the company’s commitment to user safety, stating, “We work hard to keep people safe on our platforms and are clear about the challenges of identifying and removing bad actors or harmful content.”

Other Lawsuits Against Meta

New Mexico’s case is part of a broader wave of litigation against Meta: more than 40 state attorneys general have filed lawsuits. These cases allege that Meta contributes to a mental health crisis among young people by designing addictive features on Instagram and Facebook.

Sacha Haworth, executive director of watchdog group The Tech Oversight Project, said, “For years, it’s been glaringly obvious that Meta has failed to stop sexual predators from turning online interactions into real world harm.” Haworth cited whistleblowers and internal documents as evidence supporting these claims.

How the Case Was Built

New Mexico’s lawsuit, filed in 2023 by Attorney General Raúl Torrez, relied on an undercover investigation in which agents created social media accounts posing as children to document sexual solicitations and Meta’s response. The case also highlighted Meta’s failure to fully disclose or address the dangers of social media addiction.

While Meta has not acknowledged the existence of social media addiction, executives at trial admitted to “problematic use” and claimed they want users to feel good about the time they spend on Meta’s platforms.

Legal Protections and Challenges

Tech companies have long been protected from liability for content posted on their platforms under Section 230 of the U.S. Communications Decency Act, as well as the First Amendment. However, New Mexico prosecutors argued that Meta should still be held responsible for its role in promoting harmful content through complex algorithms.

Prosecution attorney Linda Singer stated, “Evidence shows not only that Meta invests in safety because it’s the right thing to do but because it is good for business.”

What the Trial Revealed

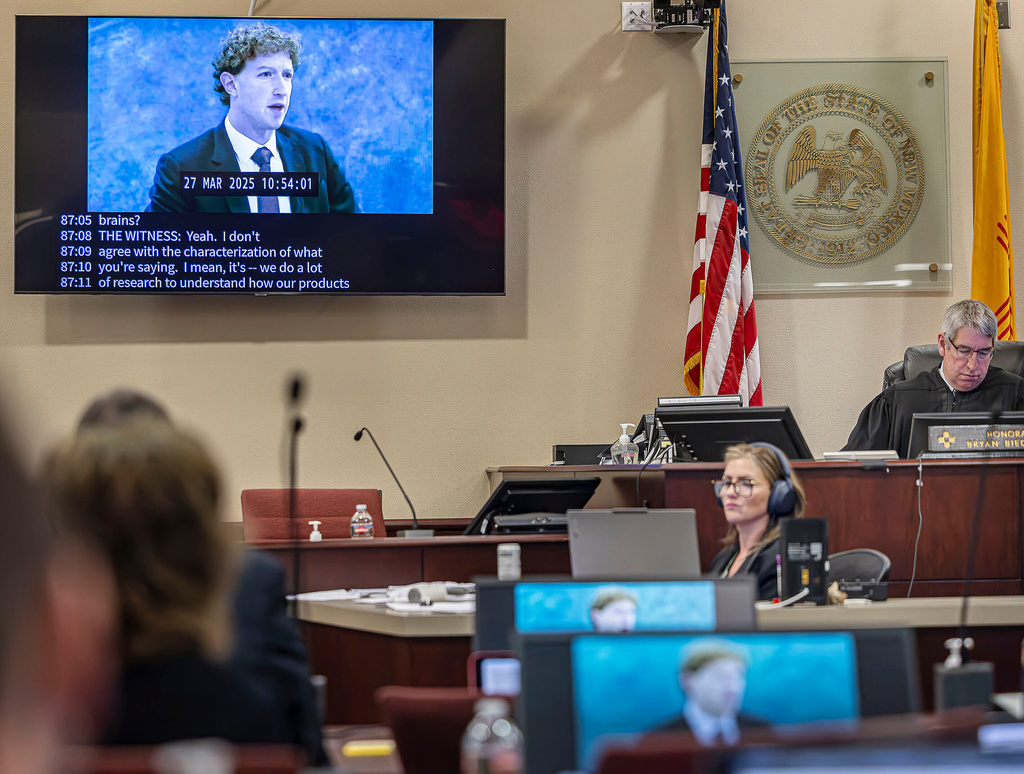

The New Mexico trial examined a wealth of internal correspondence and reports related to child safety. Jurors heard testimony from Meta executives, platform engineers, whistleblowers, psychiatric experts, and tech safety consultants.

Local educators also shared their experiences with disruptions linked to social media, including sextortion schemes targeting children. The jury considered whether Meta misled users with specific statements about platform safety by executives like Mark Zuckerberg, Adam Mosseri, and Antigone Davis.

A Moment for Parents and Advocates

ParentsSOS, a coalition of families who have lost children due to harm caused by social media, called the verdict a “watershed moment.” The group praised the decision as a significant step in the fight to hold Big Tech accountable for the dangers their products pose to children.